In this post, I share test results for the object storage services provided by DigitalOcean, Linode, and UpCloud. The results show the average time required to download files from and upload files to each provider's infrastructure. Before going into the test details, let us look at the user-facing features each provider offers.

DigitalOcean has the most advanced features of the three. From its dashboard, we can set a custom domain for the object storage CDN, customize permissions per object, and configure custom CORS headers. UpCloud has a more straightforward interface, with the unique concept of creating separate bucket domains (directories) within a single object storage subscription. Linode has the simplest interface, but it provides CLI tools for managing buckets and stored objects.

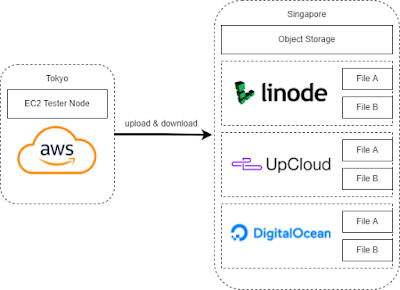

Now, let us go back to the main topic. For the test, I deployed an AWS EC2 server in Tokyo as the tester node. Then, I set up separate object storage instances on DigitalOcean, Linode, and UpCloud, all based in Singapore. I chose different regions on purpose, to introduce more transmission delay and to check the quality of service each provider delivers across regions. The following image shows the test environment.

Test environment

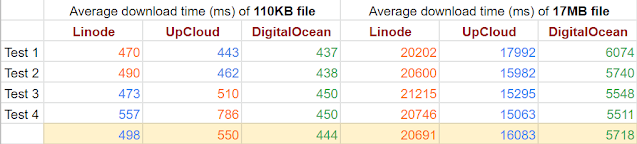

I performed the test four times. Each test consisted of five downloads and five uploads for each of two file types: an image representing a small file (110 KB) and a PDF representing a large file (17 MB). In total, each provider stored 40 files and served back 40 files. The tables below show the results.

Average download time

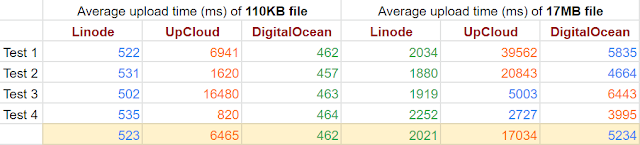

Average upload time

The results show that DigitalOcean has the most promising results in the download test, while Linode takes the longest to download large files. In the upload test, UpCloud's performance appears unstable, while Linode is the fastest at uploading large files and DigitalOcean is the fastest at uploading small files.
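
For readers who want to repeat this kind of measurement, here is a minimal sketch of a timing harness in Python. It is not the script used for the test above. The function names `time_call` and `benchmark` are made up for illustration, and the defaults of four runs with five repeats simply mirror the test procedure described earlier. Besides the average, it reports the standard deviation and the coefficient of variation, which is one possible way to put a number on how "unstable" a provider is.

```python
import statistics
import time
from typing import Callable


def time_call(action: Callable[[], None]) -> float:
    """Return the wall-clock seconds taken by one call to `action`."""
    start = time.perf_counter()
    action()
    return time.perf_counter() - start


def benchmark(action: Callable[[], None], runs: int = 4, repeats: int = 5) -> dict:
    """Time `action` runs * repeats times and summarize the durations.

    `action` is a hypothetical callable that performs one upload or one
    download; the harness itself does not know anything about the provider.
    """
    durations = [time_call(action) for _ in range(runs) for _ in range(repeats)]
    mean = statistics.mean(durations)
    # The sample standard deviation needs at least two data points.
    stdev = statistics.stdev(durations) if len(durations) > 1 else 0.0
    return {
        "count": len(durations),
        "mean_s": mean,
        "stdev_s": stdev,
        # Coefficient of variation: spread relative to the mean.
        "cv": stdev / mean if mean > 0 else 0.0,
    }


if __name__ == "__main__":
    # Stand-in for a real transfer so the sketch runs without any account.
    def fake_transfer() -> None:
        time.sleep(0.001)

    print(benchmark(fake_transfer))
```

To measure a real provider, `action` could wrap a single transfer made with an S3-compatible client, for example boto3's `upload_file` or `download_file` pointed at the provider's endpoint. That assumes the provider exposes an S3-compatible API, and the endpoint, bucket, and credentials would be your own.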
