Terraform is a tool for deploying infrastructure on many cloud providers, such as AWS, GCP, and DigitalOcean. Unlike AWS CloudFormation, which works only with AWS, Terraform supports any provider published in the Terraform Registry. Infrastructure is described in HCL (HashiCorp Configuration Language), a domain-specific language designed for provisioning and configuring infrastructure.

Meanwhile, UpCloud is an alternative cloud provider aimed at SMEs. It targets a segment similar to DigitalOcean and Linode, and it offers popular cloud services such as managed Redis databases, S3-compatible storage, private networks, and load balancers. Even though it costs a little more than DigitalOcean and similar providers, each service is fairly feature-complete, for example the load balancer that we will use in this post. It also releases new features actively, such as the recently published managed OpenSearch database.

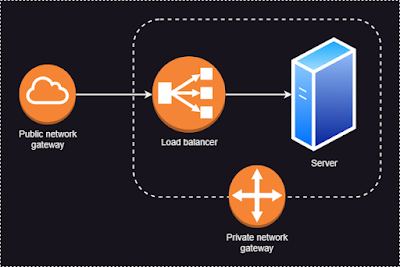

As an example, we will build the infrastructure for a simple web server, following the architecture shown in the image above. The server will be reachable through a domain name over HTTPS.

We will deploy the following services:

- Private network and the router

- Server to host the web service

- Load balancer, including its backend and frontend rules

- Dynamic certificate for HTTPS

First, we define the variables that our resources require. We store them in variable.tf.

    # basic variables
    variable "upcloud_username" {
      description = "UpCloud username"
      type        = string
    }

    variable "upcloud_password" {
      description = "UpCloud password"
      type        = string
    }

    variable "upcloud_zone" {
      description = "UpCloud zone"
      type        = string
      default     = "sg-sin1"
    }

    # networking
    variable "my_router_name" {
      description = "Basic network router name"
      type        = string
      default     = "basic-net-router"
    }

    variable "my_network_name" {
      description = "Basic private network name"
      type        = string
      default     = "basic-net"
    }

    variable "my_lb_name" {
      description = "Basic load balancer name"
      type        = string
      default     = "basic-lb"
    }

    # server
    variable "server_hostname" {
      description = "Server hostname"
      type        = string
      default     = "terraform.lukinotes.com" # change to your domain
    }

    variable "server_port" {
      description = "Default web server port"
      type        = number
      default     = 8080
    }

    variable "server_private_ip" {
      description = "Manual private IP address for the server"
      type        = string
      default     = "10.0.0.10" # it means we will need a private network with subnet 10.0.0.0/24
    }
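
The hard-coded address above only works if it falls inside the private subnet defined in main.tf. As a minimal sketch, both the gateway and the server address could be derived from one CIDR with Terraform's built-in `cidrhost` function. The `private_cidr` variable and the local names are hypothetical and are not used in the rest of this post.

```hcl
# Hypothetical variable: a single source of truth for the private subnet.
variable "private_cidr" {
  description = "CIDR block of the private network"
  type        = string
  default     = "10.0.0.0/24"
}

locals {
  # cidrhost(prefix, hostnum) returns the address at that host number in the prefix.
  derived_gateway_ip = cidrhost(var.private_cidr, 1)  # "10.0.0.1"
  derived_server_ip  = cidrhost(var.private_cidr, 10) # "10.0.0.10"
}
```

With this approach, changing the subnet in one place keeps the gateway and the server address consistent.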

Second, we create a configuration file, config.tf, to declare our provider, UpCloud.

    terraform {
      required_providers {
        upcloud = {
          source  = "UpCloudLtd/upcloud"
          version = "~> 2.0"
        }
      }
    }
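
The `~> 2.0` constraint accepts any 2.x release of the provider but not 3.0. As a sketch of an alternative (replacing the block above, not adding to it), the same constraint can be written as an explicit range, together with an optional `required_version` for Terraform itself. The `>= 1.0` value is an assumption; pick whatever matches your installation.

```hcl
terraform {
  # Assumed minimum Terraform CLI version; adjust to your environment.
  required_version = ">= 1.0"

  required_providers {
    upcloud = {
      source = "UpCloudLtd/upcloud"
      # Equivalent to "~> 2.0": any 2.x release, but not 3.0 or later.
      version = ">= 2.0, < 3.0"
    }
  }
}
```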

Third, we define the networking and server resources in main.tf. We also need the SSH public keys of our devices, because we will use public key authentication to access the server remotely over SSH. The server will be attached to a public network, our private network, and the utility network of our account. We also enable daily backups for the server because they are free on UpCloud.

    provider "upcloud" {
      username = var.upcloud_username
      password = var.upcloud_password
    }

    # private network
    resource "upcloud_router" "sample" {
      name = var.my_router_name
    }

    resource "upcloud_network" "sample" {
      name   = var.my_network_name # required
      zone   = var.upcloud_zone    # required
      router = upcloud_router.sample.id

      ip_network { # required
        address            = "10.0.0.0/24" # required
        dhcp               = true          # required
        dhcp_default_route = false         # is the gateway the DHCP default route?
        family             = "IPv4"        # required
        gateway            = "10.0.0.1"
      }
    }

    # basic server
    resource "upcloud_server" "sample" {
      hostname = var.server_hostname
      zone     = var.upcloud_zone
      plan     = "1xCPU-2GB"

      template {
        storage                  = "Ubuntu Server 22.04 LTS (Jammy Jellyfish)"
        size                     = 50
        filesystem_autoresize    = true
        delete_autoresize_backup = true
      }

      simple_backup {
        plan = "daily"
        time = "1900"
      }

      network_interface {
        type = "public"
      }

      network_interface {
        type                = "private"
        network             = upcloud_network.sample.id
        ip_address_family   = "IPv4"
        ip_address          = var.server_private_ip
        source_ip_filtering = true
      }

      network_interface {
        type = "utility"
      }

      login {
        user            = "root"
        create_password = false
        keys = [
          "ssh-rsa abcabc pc1",
          "ssh-rsa xyzxyz pc2"
        ]
      }

      metadata = true

      labels = {
        env   = "dev"
        owner = "luki"
      }

      # sample provisioning
      user_data = <<-EOF
        #!/bin/bash
        echo "Hello, World!" > index.html
        nohup busybox httpd -f -p ${var.server_port} &
      EOF
    }
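
Instead of pasting the public keys into main.tf, they could be read from files on the machine that runs Terraform. The sketch below uses the built-in `pathexpand`, `file`, and `trimspace` functions. The `ssh_public_key_paths` variable, its default path, and the local name are assumptions for illustration.

```hcl
# Hypothetical variable: paths to the public keys of the devices allowed to log in.
variable "ssh_public_key_paths" {
  description = "Paths to SSH public key files"
  type        = list(string)
  default     = ["~/.ssh/id_rsa.pub"]
}

locals {
  # pathexpand resolves "~", file reads the content, trimspace drops the trailing newline.
  ssh_public_keys = [
    for path in var.ssh_public_key_paths : trimspace(file(pathexpand(path)))
  ]
}

# In the login block of upcloud_server.sample, the list would then be used as:
#   keys = local.ssh_public_keys
```

This keeps key material out of the configuration files and lets each team member point to their own key.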

Next, we define the load balancer and its components: the backend, the frontends, and the certificate. We store them in loadbalancer.tf.

    resource "upcloud_loadbalancer" "sample" {
      configured_status = "started"
      name              = var.my_lb_name   # required
      plan              = "development"    # required
      zone              = var.upcloud_zone # required

      # 0
      networks {
        name    = var.my_network_name # required
        type    = "private"           # required
        family  = "IPv4"              # required
        network = upcloud_network.sample.id
      }

      # 1
      networks {
        name   = "public-net"
        type   = "public"
        family = "IPv4"
      }
    }

    # BE
    resource "upcloud_loadbalancer_backend" "sample" {
      loadbalancer = upcloud_loadbalancer.sample.id
      name         = "lb-be-sample"
    }

    ## Attach our server as BE handler
    resource "upcloud_loadbalancer_static_backend_member" "sample_1" {
      backend      = upcloud_loadbalancer_backend.sample.id
      name         = "lb-be-sample-1"
      ip           = upcloud_server.sample.network_interface[1].ip_address # private ip address
      port         = var.server_port
      weight       = 100
      max_sessions = 1000
      enabled      = true
    }

    # FE http
    resource "upcloud_loadbalancer_frontend" "sample" {
      loadbalancer         = upcloud_loadbalancer.sample.id
      name                 = "lb-fe-sample"
      mode                 = "http"
      port                 = 80
      default_backend_name = upcloud_loadbalancer_backend.sample.name

      networks {
        name = upcloud_loadbalancer.sample.networks[1].name # public network
      }
    }

    ## Redirect HTTP to HTTPS for default server
    resource "upcloud_loadbalancer_frontend_rule" "sample_redirect_secure" {
      # required
      frontend = upcloud_loadbalancer_frontend.sample.id
      name     = "redirect-https"
      priority = 60

      # optional
      actions {
        http_redirect {
          scheme = "https"
        }

        set_forwarded_headers {
          active = true
        }
      }

      matchers {
        header {
          name        = "Host"
          method      = "starts"
          value       = var.server_hostname
          ignore_case = true
        }
      }
    }

    # FE https
    resource "upcloud_loadbalancer_frontend" "sample_secure" {
      loadbalancer         = upcloud_loadbalancer.sample.id
      name                 = "lb-fe-sample-secure"
      mode                 = "http"
      port                 = 443
      default_backend_name = upcloud_loadbalancer_backend.sample.name

      networks {
        name = upcloud_loadbalancer.sample.networks[1].name # public network
      }
    }

    ## Handle HTTPS request for default server
    resource "upcloud_loadbalancer_frontend_rule" "sample_secure_serve" {
      # required
      frontend = upcloud_loadbalancer_frontend.sample_secure.id
      name     = "serve-http-default"
      priority = 50

      # optional
      actions {
        use_backend {
          backend_name = upcloud_loadbalancer_backend.sample.name
        }

        set_forwarded_headers {
          active = true
        }
      }

      matchers {
        header {
          name        = "Host"
          method      = "starts"
          value       = var.server_hostname
          ignore_case = true
        }
      }
    }

    # dynamic certs
    resource "upcloud_loadbalancer_dynamic_certificate_bundle" "sample_dyn" {
      name = "sample-dyn"
      hostnames = [
        var.server_hostname
      ]
      key_type = "rsa"
    }

    # attach certificate
    resource "upcloud_loadbalancer_frontend_tls_config" "sample_secure" {
      name               = "sample"
      frontend           = upcloud_loadbalancer_frontend.sample_secure.id
      certificate_bundle = upcloud_loadbalancer_dynamic_certificate_bundle.sample_dyn.id
    }
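
Indexing `network_interface[1]` depends on the order in which the interfaces are declared in main.tf. A sketch of an alternative, assuming each interface exposes the `type` and `ip_address` attributes used elsewhere in this post, selects the private address by type instead. The local names are hypothetical.

```hcl
locals {
  # Collect the addresses of all interfaces whose type is "private".
  backend_private_ips = [
    for ni in upcloud_server.sample.network_interface : ni.ip_address
    if ni.type == "private"
  ]

  # This setup has exactly one private interface, so take the first match.
  backend_private_ip = local.backend_private_ips[0]
}

# The backend member would then reference it as:
#   ip = local.backend_private_ip
```

The trade-off is a little more code in exchange for a reference that survives reordering of the `network_interface` blocks.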

Finally, we output the values we need afterwards: the public IP address of the server and the DNS names of the deployed load balancer. We then add the load balancer's DNS name to the DNS records of our domain as a CNAME record.

    output "public_ip_address" {
      description = "Server IPv4 address"
      value       = upcloud_server.sample.network_interface[0].ip_address
    }

    output "loadbalancer_networks" {
      description = "Public address of the load balancer"
      value       = [for item in upcloud_loadbalancer.sample.networks : { dns_name = item.dns_name, type = item.type, name = item.name }]
    }
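
The `loadbalancer_networks` output lists every network of the load balancer. If only the public DNS name is needed for the CNAME record, a narrower output could look like the sketch below. The output name is hypothetical, and the sketch assumes exactly one network of type `public`, as in this setup.

```hcl
output "loadbalancer_public_dns_name" {
  description = "DNS name of the load balancer's public network, for the CNAME record"

  # one() returns the single element of the list and fails if there is more than one.
  value = one([
    for item in upcloud_loadbalancer.sample.networks : item.dns_name
    if item.type == "public"
  ])
}
```

After `terraform apply`, the value can be printed on its own with `terraform output loadbalancer_public_dns_name`.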

Then, we can run the following commands.

    terraform init
    terraform plan
    terraform apply
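
Because `upcloud_username` and `upcloud_password` have no default values, `terraform plan` and `terraform apply` will prompt for them. One common way to avoid the prompts is a `terraform.tfvars` file, which Terraform loads automatically from the working directory. The values below are placeholders, and the file should stay out of version control.

```hcl
# terraform.tfvars (placeholder values, not real credentials)
upcloud_username = "your-upcloud-username"
upcloud_password = "your-upcloud-password"

# Optional overrides of the defaults declared in variable.tf
upcloud_zone    = "sg-sin1"
server_hostname = "terraform.lukinotes.com"
```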
